Client Case: How AI can help the real estate industry

Our client needed smarter software. Not AI advice. Not a chatbot. Someone who could sit next to him, understand his work quickly, and turn that understanding into something that actually worked.

So that's what we did.

At Stackhavn, we are builders. Not consultants writing recommendations. Not an agency waiting for a brief, and not an extra hand in an existing team. We join the daily work of business people, pick up the domain, and start building.

This is what that looked like for this project.

First: learn the work, not the requirements

We didn't start with a product specification. We started by watching.

We sat next to the valuator while he worked. We saw how many browser tabs were open. We watched him pull ownership data from Kadaster, building details from BAG, zoning information from the municipality, energy labels from RVO, climate risk maps, and recent comparable sales from separate platforms. All copy-pasted into a Word template.

Writing a (commercial) valuation report that should take hours took days. Not because the valuator was slow. Because the data was spread across too many systems and the right tooling simply didn't exist.

This is what an embedded engineer with a builder mindset can do: see the real problem and map it to working software. Requirements documents describe what people think they need. Watching someone work shows what they actually do.

Within days we understood the workflow, the pain points, and the regulatory constraints well enough to start designing.

Then: turn domain knowledge into decisions

Dutch property data is among the richest in Europe. It's also messy. Records conflict across registries. The BAG says one thing, the satellite image says another. WOZ values don't always match market observations.

An outside team would spend weeks just getting up to speed on this landscape. Because our engineer was already working alongside the expert, we could move straight into architecture decisions.

Three decisions came first. Connect all key Dutch property data sources into one interface. Show conflicting data transparently instead of hiding it, because in a regulated industry the appraiser needs to see the conflict to make a defensible judgement. Build an AI layer that works like a well-prepared junior colleague: it does the groundwork, the professional makes the call.
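To make "show the conflict instead of hiding it" concrete, here is a minimal sketch in Python. It is an illustration under assumptions, not the code we shipped: the `Observation` type, the field names, and the numbers are all hypothetical. The point is only that values from different registries are kept side by side and flagged when they disagree, rather than merged into a single "best" value.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One value for one property field, as reported by one source (hypothetical type)."""
    field: str     # e.g. "floor_area_m2"
    value: object  # must be hashable (numbers, strings)
    source: str    # e.g. "BAG", "satellite"


def find_conflicts(observations):
    """Return the fields whose sources disagree, with every competing value."""
    grouped = defaultdict(list)
    for obs in observations:
        grouped[obs.field].append(obs)

    conflicts = {}
    for field, group in grouped.items():
        # More than one distinct value means the sources disagree.
        if len({obs.value for obs in group}) > 1:
            conflicts[field] = [(obs.source, obs.value) for obs in group]
    return conflicts


# Made-up example values, for illustration only.
observations = [
    Observation("floor_area_m2", 420, "BAG"),
    Observation("floor_area_m2", 465, "satellite"),
    Observation("energy_label", "B", "RVO"),
]
conflicts = find_conflicts(observations)
```

Nothing is resolved automatically here. The conflicting values are handed to the appraiser with their sources attached, and the judgement stays with the professional.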

This is where our way of working pays off. About 80% of what we build is reusable across every client: infrastructure, AI framework, data pipelines, authentication, deployment. We call these the Stackhavn platform modules. The remaining 20% is domain-specific: the Kadaster integration, the valuation workflow, the regulatory logic. That's the part the embedded engineer builds together with the client.

Because the foundation was already there, we could focus all our time on the part that actually matters: making the product fit the valuator’s real workflow.

Next: build it together

With the domain understood and the architecture in place, we built the product in short cycles. Not in a separate office. Next to the domain expert.

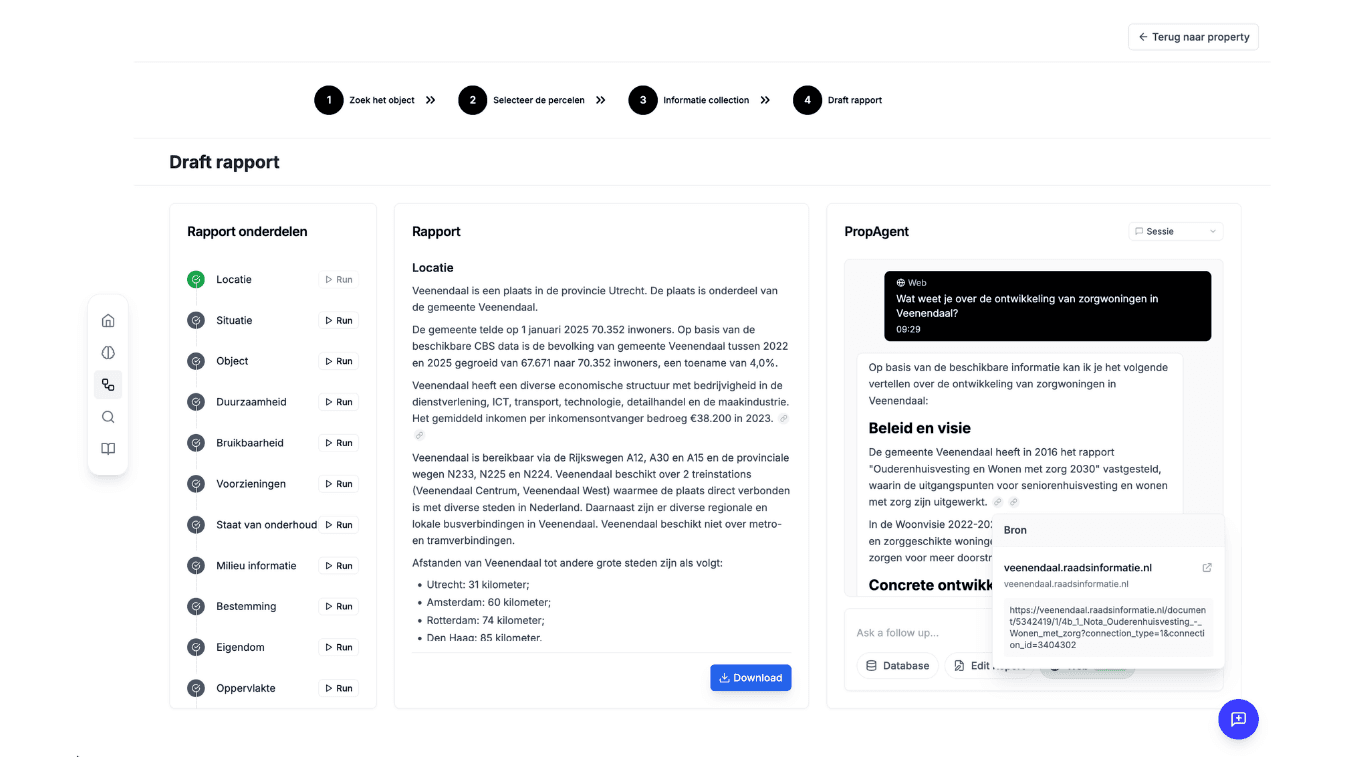

Data layer: Enter an address and the platform pulls parcel records, building specs, CBS demographic data, zoning plans, energy labels and visualisation maps. One screen, all sources referenced.

AI agent: Not a chatbot. A structured assistant that guides the expert through the valuation process: data collection, document analysis, voice transcription of field inspections, draft report sections with source references, and visualisations. Every data point is traceable. In regulated work, showing where a number came from isn't optional.
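A rough sketch of what "every data point is traceable" can look like in a draft report section. The `SourcedValue` type, the `render_section` helper, and the sample values and dates are hypothetical and exist only for this illustration. The idea is that a value never travels without its source and retrieval date.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SourcedValue:
    """A report value that always carries its provenance (hypothetical type)."""
    label: str
    value: str
    source: str
    retrieved_on: date


def render_section(title, values):
    """Render a draft section where every figure gets a numbered source note."""
    lines = [title]
    notes = []
    for index, item in enumerate(values, start=1):
        lines.append(f"{item.label}: {item.value} [{index}]")
        notes.append(
            f"[{index}] {item.source}, retrieved {item.retrieved_on.isoformat()}"
        )
    return "\n".join(lines + ["", "Sources"] + notes)


# Made-up example values, for illustration only.
draft = render_section(
    "Property details",
    [
        SourcedValue("Energy label", "B", "RVO", date(2024, 1, 15)),
        SourcedValue("Year built", "1998", "BAG", date(2024, 1, 15)),
    ],
)
print(draft)
```

Because the source is part of the value itself, a figure cannot end up in a draft without a note saying where it came from.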

Workflow layer: This is the part most teams underestimate. The AI is the visible feature. The workflow determines whether it actually fits your specific business. We built a dashboard with draft valuations, team sharing, and a clear path from start to delivered report. Shaped around how the valuator actually worked, not how a process diagram said he should.

Why this way of working matters

Our model is built for a specific situation: a business with deep domain knowledge, a real operational problem, and no interest in a twelve-month software project.

We get going quickly. An embedded engineer starts producing from week one. Not because we skip steps, but because we don't waste time on handoffs, translation layers, and misunderstood briefs.

We learn the domain by working alongside you and build tailor-made. The valuator didn't write specifications. He showed us his work. We asked questions, watched decisions, and translated what we saw into product choices. Together. By the end of week one our engineer could explain the difference between erfpacht and vol eigendom. That kind of context is what makes or breaks a domain-specific AI product.

We turn observed problems into working solutions. Not features from a document, and not AI for the sake of doing something with AI. We build solutions to bottlenecks we observed in the field. Fragmented data became a unified data layer. The auditability requirement became traceable AI. A messy real-world workflow became a flexible pipeline.

The foundation was already proven. The embedded engineer's job is to shape the last 20% so precisely around the client's domain that the product feels purpose-built.

What we took from this project

Watch before you build. The decisions that held up came from observation, not from requirements documents. An engineer who sits in the operation will always outperform a team working from a brief.

In regulated industries, auditability is part of the product. Showing your work isn't an afterthought. It's often the reason a professional can justify using the tool at all.

Generic AI is easy to dismiss. Specific AI is hard to ignore. There's a real difference between a tool that answers anything and one that knows which parts of the process can be automated, and which are even allowed to be. That specificity only comes from being close to the domain.